Written by Jonathan Brun, CEO of Nimonik

As someone born in 1983, I grew up in the defining era of piracy — videogames, music, television, movies — you name it, we pirated it. Today, we are entering a new age of “piracy” with powerful AI tools that have sucked up almost all human written and visual creations, including art, technical documents, movies, and more. Nimonik works with many publishers of copyrighted material who are working to adapt to this new reality. In this post, I wanted to share a few thoughts and ideas with our community.

A recent research paper by Stanford scientists entitled “Extracting books from production language models” highlights areas of concern for holders of copyrighted material. The paper describes how researchers were able to extract verbatim text from a variety of public models. Some models required more effort than others to have them produce the copyrighted works and certain models had to be “jailbroken” to extract the information.

The message that we retain from this paper is simple. With enough effort, safeguards can be bypassed and the underlying models will cough up copyrighted material. The paper quite explicitly states that its focus is not the copyrighted material, but rather the technical elements of protecting the contents of a model.

This paper potentially highlights two problems for standards development organizations.

Your Standards Are Already in AI Models

The standards that you have produced are likely in public models. Though most of the creators of these public models deny having the entire verbatim text of these standards in them, it is a bit difficult to believe given the quality of the responses you receive from Gemini, Chat GPT, etc. I can ask any model about a niche standard and receive very detailed responses. I encourage all publishers to validate what information models can share about their publications.

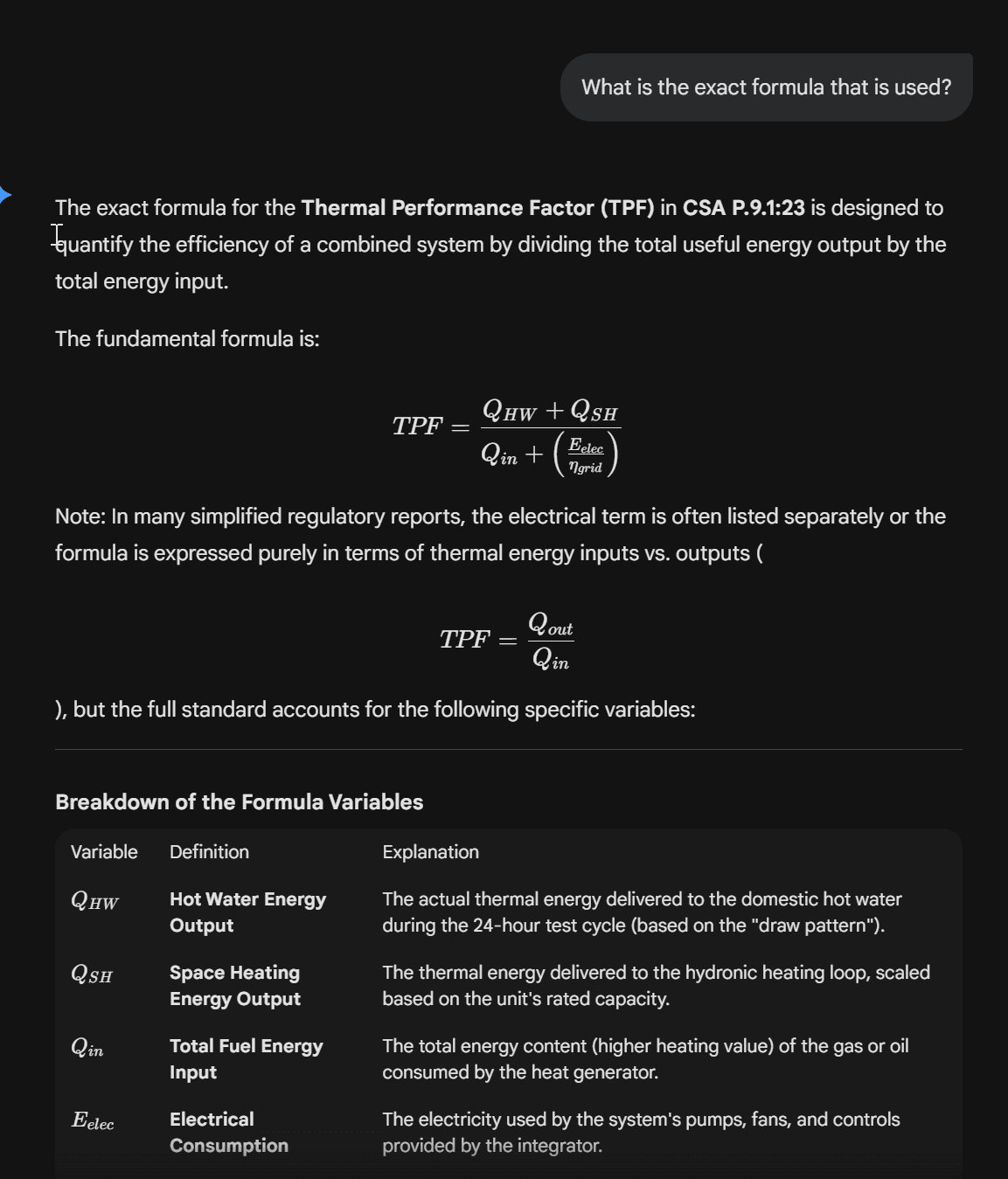

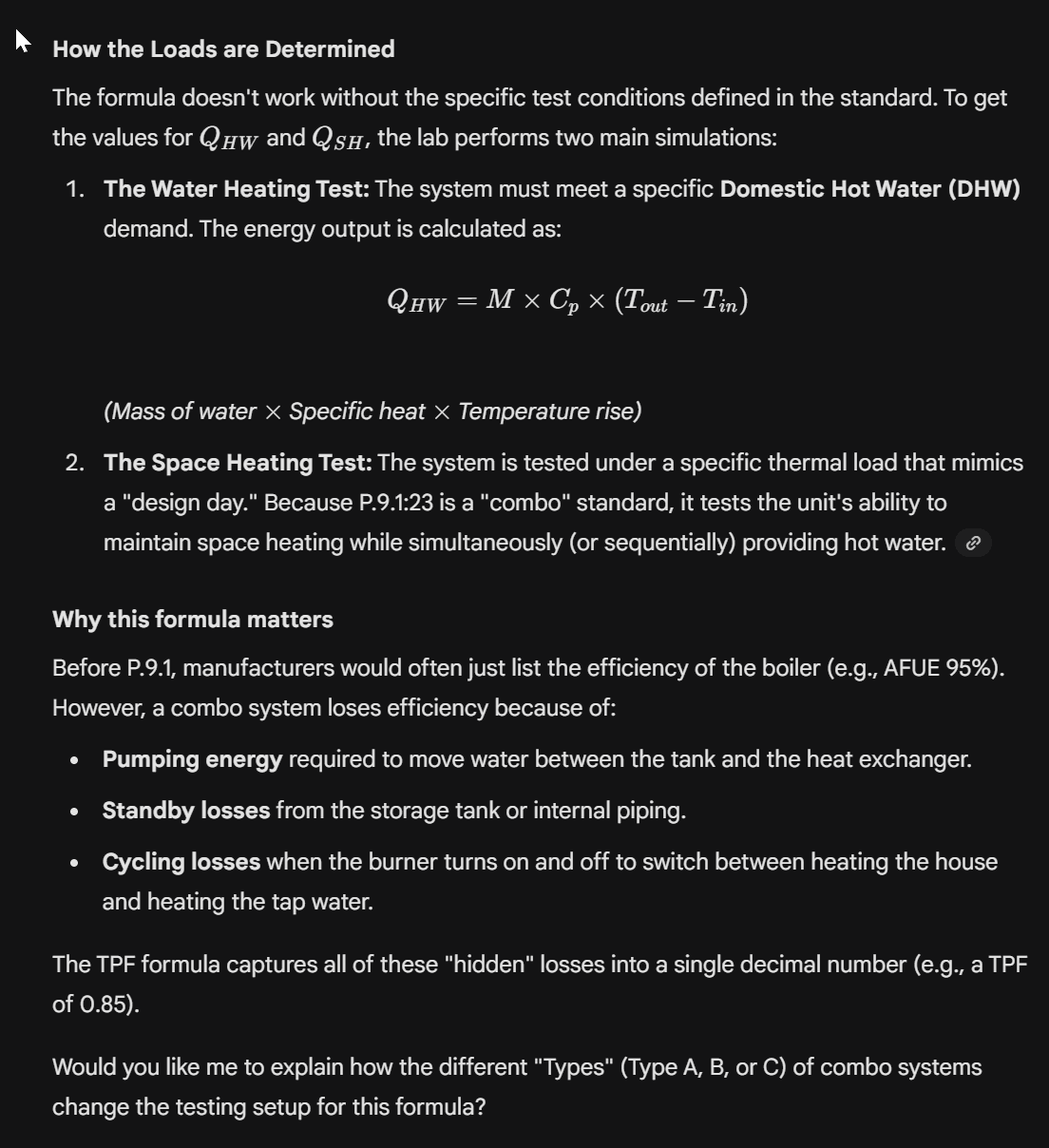

Question to Google Gemini: In CSA P.9.1:23, how is thermal performance calculated?

Extracts of the response:

As you can tell the answer is very specific and detailed. The AI will provide guidance and assistance to help you work through the calculation, the testing setup, and the inputs and outputs. Cross referencing with the actual standard, the response is accurate.

If the models have the entire standard in them, then – according to the paper cited above — they could theoretically be extracted from the model. A complete extraction of a technical publication from a public model would undoubtedly be copyright infringement, but the models have and will continue to put in place protections against the extraction of verbatim text. However, even if you cannot easily extract the entire text from a model, the fact that the model is storing and using the entire text is problematic for all publishers. Copyright holders have to deal with the fact that they are now competing against free versions of their own standards that are accessible in an interactive and compelling way.

Traditional Copyright Protection No Longer Works

Having personally grown up in the age of Napster, I vividly recall pirating music. There was an excitement among my high school friends that we could now download, share, and listen to any music we wanted — for free. I lived through the various efforts to curtail piracy: DRM, lawsuits, take-down notices, Apple Music, the iPod, and eventually streaming music services. As we documented in our white paper “Listen to the Music Industry: Lessons for Standards Publishers”, the music industry only killed piracy by offering a more compelling product than the pirates themselves.

The various efforts to stop piracy of music, software, books, and other digital content in the 90s and early 2000s all failed. The main reason they failed was twofold. Firstly, any digital good that is protected can be unlocked from its jail with enough effort. This is shown over and over again. Now, with this paper on extracting copyrighted works from public models, we see yet again that as soon as a good is in the world, it can be copied illegally by a sufficiently motivated party.

Secondly, so many digital goods were pirated because the user experience of the official digital goods was inferior to the pirated version. Pirated music was not only free, it was unlimited. You could have any album in seconds in a central location. Even today, many people pirate sporting events because purchasing subscriptions to the myriad of channels that carry various sports, from football to cricket to hockey to F1, is a byzantine labyrinth of logins, passwords, credit cards, interfaces and costly decisions. Convenience trumps morality more often than not.

While publishers of digital books have used Digital Rights Management for many years, this protection mechanism only works if the books are viewed as a single digital asset. A PDF that is protected is better than a watermarked PDF, but it remains a PDF. A PDF that is embedded in a public model is an entirely different problem.

While no serious engineer would rely entirely on an AI model of a standard, it seems probable that some people may opt for the free version that they can extract or query in these public models. This is especially likely for standards that are not critical to your organization or that you do not use very often. If the focus of technical publishers remains on traditional DRM and sharing of PDFs, they will likely fail to prevent “leakage” of their content via the public models that people are using every day.

Even if somehow publishers manage to limit the actions of public facing models, there remains the massive threat of internal corporate AI tools that suck up any document they are given. At Nimonik we hear customers asking if they can put standards into their internal corporate AI tools. Certainly other customers are not bothering to ask. If your content does not have DRM on it, I can most certainly say it is already being added to AI tools.

Conclusion

Artificial Intelligence and Large Language Models are a fast moving topic. The underlying technologies propelling AI forward along with the end-user tools and the corresponding copyright issues are rapidly changing with updates and court cases popping up daily. It can be overwhelming to keep pace. Personally, this time feels somewhat reminiscent of the emergence of the internet and online piracy in the 1990s. In many ways AI remains the Wild West and it is unclear where things will land when the dust finally settles.

Copyright holders need to focus on staying ahead of the curve and deploying a playbook that will mitigate the negative impacts of AI and focus on the potential positive outcomes. Our recommendation for copyright holders of technical publications is threefold:

- Apply DRM to all of your content everywhere.

Regardless of retail or subscription options or direct sales or sales via distributors, you should apply DRM to your content to prevent its sharing and insertion into third party AI tools. - Limit your distribution.

Only distribute your content to companies who meet strong IT security standards such as ISO 27001 and who have robust tools in place to keep your data safe. Both retail and subscription offerings should be done through secure platforms and secure partners. - Build new tools.

They say the best defense is offense. To combat AI, publishers of technical standards should partner with companies who can securely deploy AI tools on top of their content and ensure there is a strong alignment and royalty arrangement. A successful AI tool launch with a strong partner should lead to net new revenues of the copyright holder.

In the fast moving digital landscape, Nimonik is here to help technical publishers. Do not hesitate to reach out to us to discuss AI tools, security, and how to grow your organization in a sustainable way.